Senior Product Designer

// FINTECH, ENTERPRISE SAAS & AI WORKFLOWS

I help product teams define how complex products behave before engineering starts building.

Most requirements hold up until you introduce multiple user roles, approval chains, integrations, missing data, exception paths, or AI-generated outputs. That's where teams slow down.

I work with Fintech, Enterprise SaaS, and AI product teams to turn that complexity into clear, buildable product behavior that engineering can implement with confidence.

"Jake is self-motivated and proactive. He started adding value right out of the gate and was a pleasure to work with. I would welcome working with him again."

// How I Can Help Your Team

// THE PAST WORK / CASE STUDIES

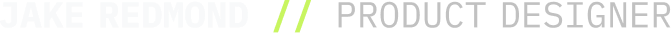

TD Bank: Enterprise Enrollment UX

The Work: Led the UX design for a high-stakes digital enrollment initiative targeting ~700,000 new users.

The Impact: I translated complex compliance rules and legacy API constraints into a seamless, modern user interface. By mapping the exact UX flows and edge cases, I aligned 8 disconnected teams around a single, buildable design, preventing engineering rework before development began.

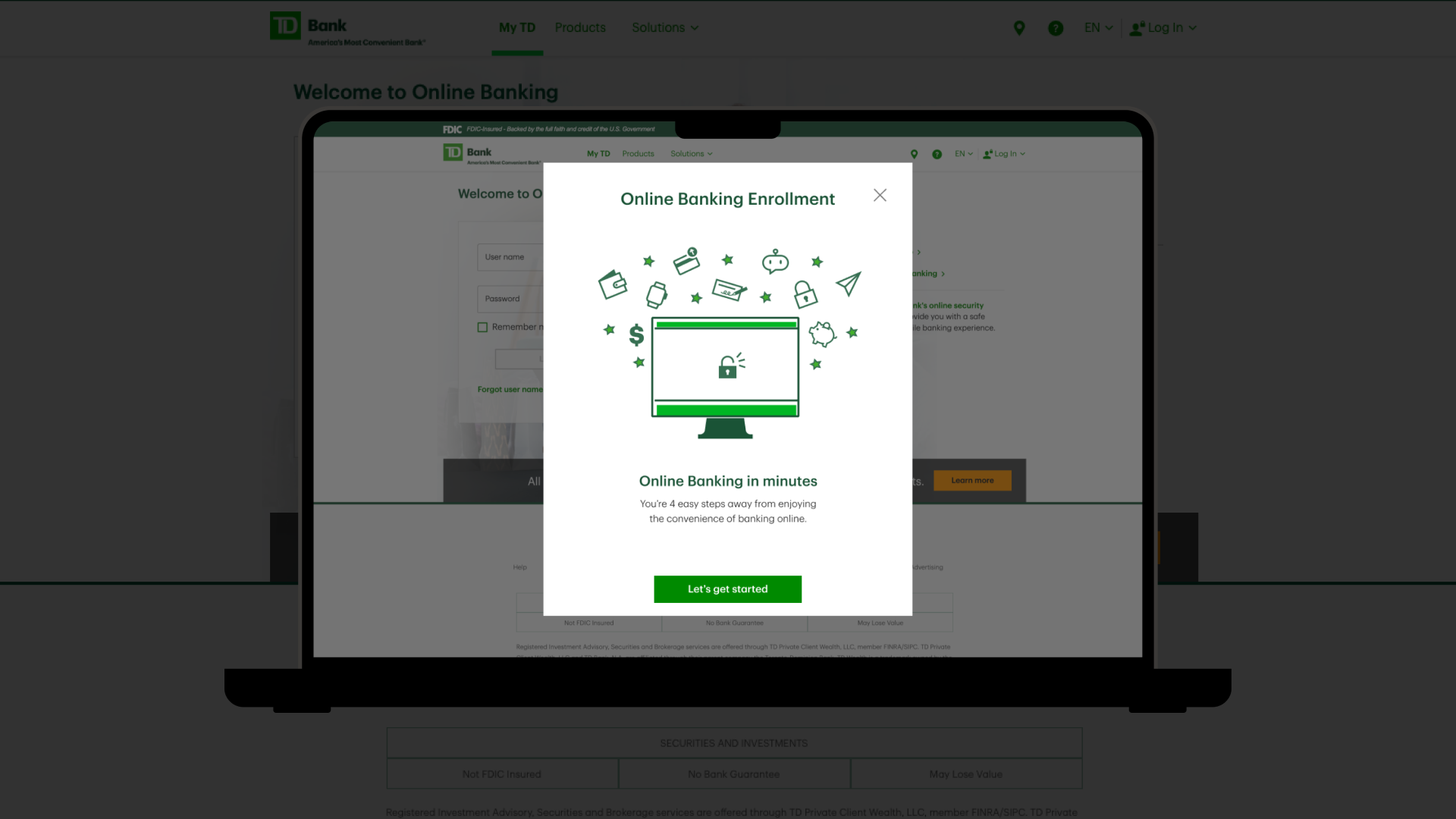

Invest Clearly: Onboarding Optimization

The Work: Redesigned a frustrating, compliance-heavy onboarding process into a progressive, relationship-first user experience.

The Impact: I restructured the UI to reduce cognitive load and placed complex KYC requirements behind an intuitive step-by-step flow. The new design significantly reduced top-of-funnel drop-off.

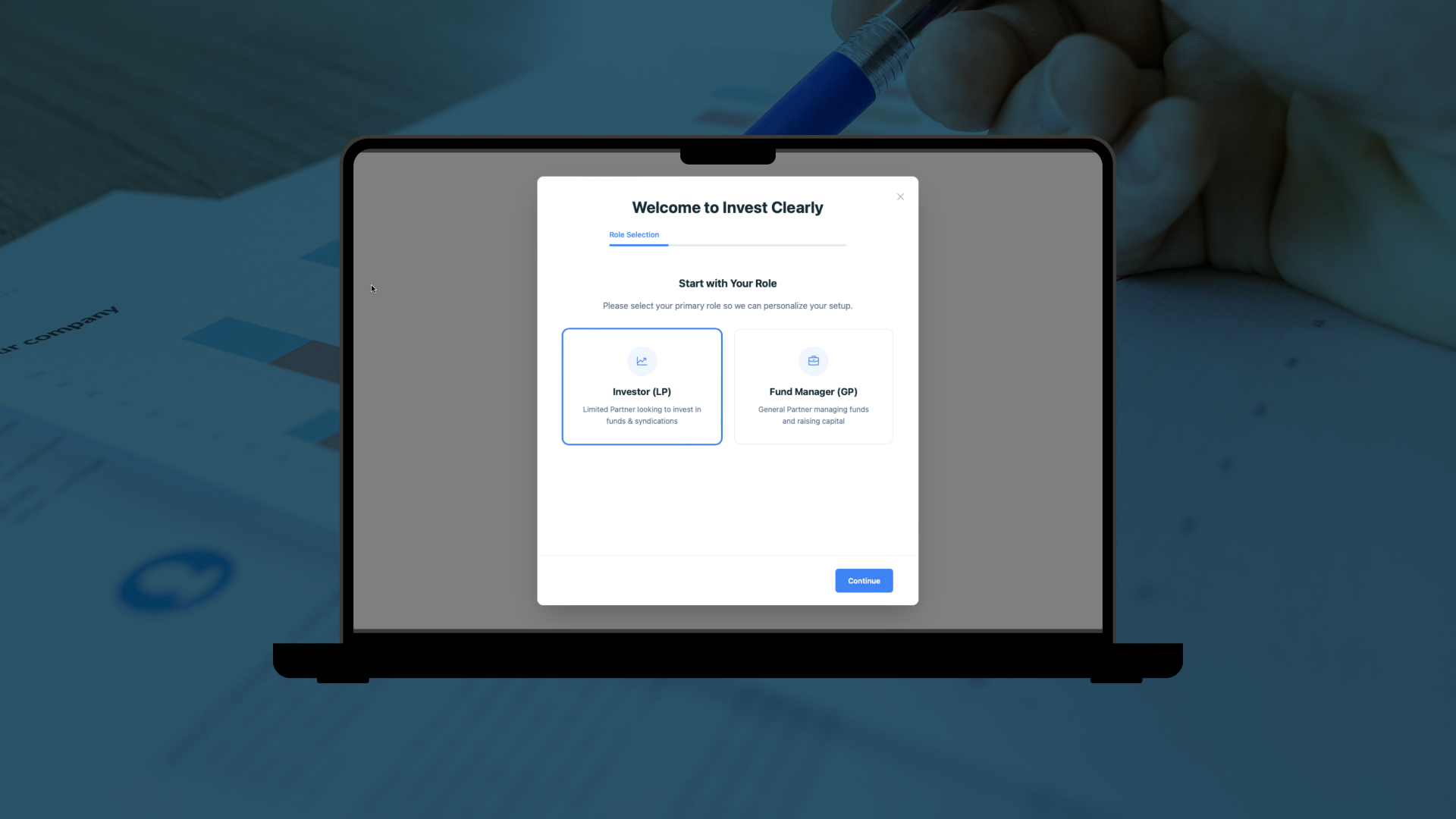

Project RELAY: AI Interaction Design

The Work: Designed and prototyped a voice-first AI task manager to eliminate the friction of household coordination.

The Impact: I built the complete UX, from the AI's natural language interaction model to the live React UI, which I designed and deployed myself. Because the interaction model, decision logic, and AI behavior were defined before implementation began, the entire product was designed, built, and deployed in under 72 hours with no production defects.

// The Engineering Handoff Standard

Most implementation problems don't start in engineering. They start when teams haven't fully defined how the product should behave across different users, permissions, integrations, exceptions, and failure conditions. My job is making those decisions explicit before development begins so engineering receives specifications, not interpretation puzzles.

// Let’s unblock your engineering team

If your developers are playing product manager during implementation to fill in missing UX logic, that’s a handoff problem. Let’s fix it.